Cluster monitoring with Grafana#

Overview#

A Grafana dashboard lets you visualize, query, and explore your system’s metrics and enables you to access your system logs and traces. Cerebras offers you two Cerebras-tailored Grafana Dashboards: Cluster Management Dashboard and WsJob Dashboard.

Grafana dashboards provide a powerful platform to visualize, query, and explore system metrics, while also offering access to system logs and traces. Cerebras offers two customized Grafana Dashboards tailored to their hardware and cluster management needs:

Cluster Management dashboard This dashboard is designed to help users and administrators visualize and manage the Cerebras Wafer-Scale cluster. The WsJob dashboard is specialized for monitoring and managing individual jobs running on the Cerebras Wafer-Scale cluster.

Cluster Management Dashboard#

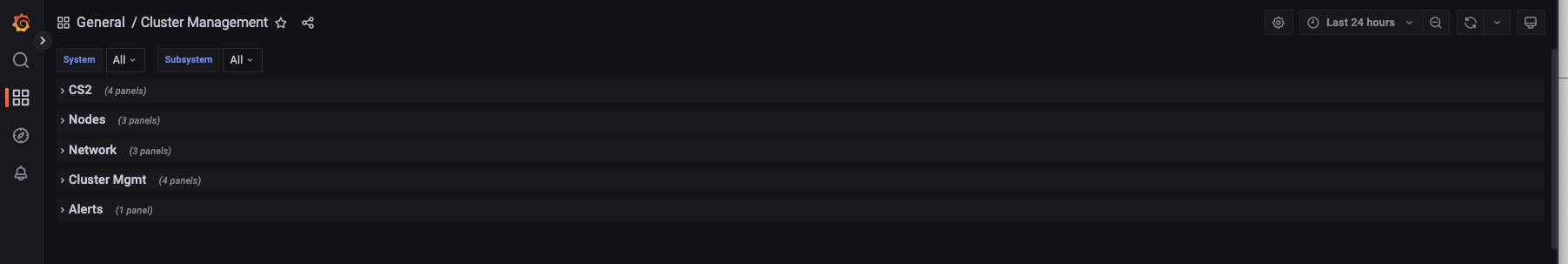

The Cluster Management dashboard shows the overall state of the cluster. It includes the following:

CS-2 systems - Overall CS-2 system status and errors

Nodes:

Kubernetes nodes warnings and errors

Space usage health

Network

Hardware NIC errors

Kubernetes CNIs errors

The following figure displays Cerebras’s Wafer-scale cluster management dashboard:

WsJob Dashboard#

The WsJob Dashboard provides job-level metrics, logs, and traces, allowing users to closely monitor the progress and resource utilization of their specific jobs.

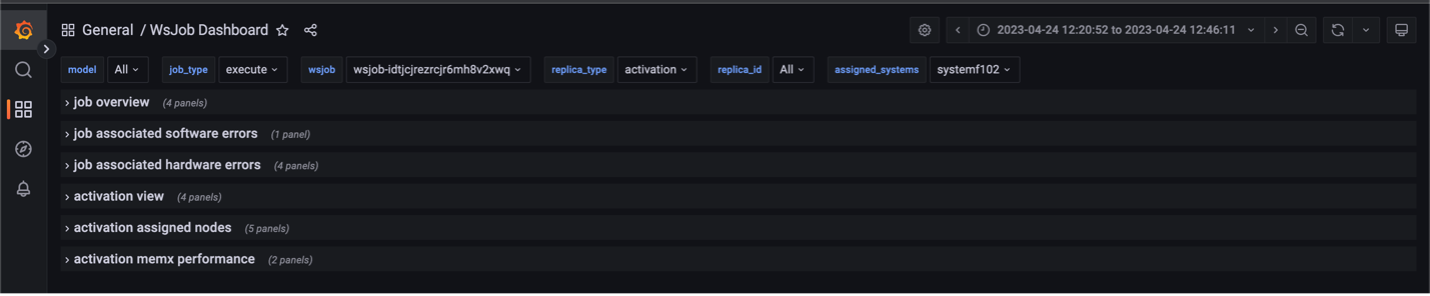

There are five panes in this dashboard:

Job overview

Displays the overview of memory/cpu/network bandwidth numbers for all replicas of selected job

Job associated software errors

Displays job runtime errors (currently only shows

OOMKilledstatus)

Job associated hardware errors

Displays any NIC, CS-2, or physical node that is assigned to this job and is having errors during the job execution

Replica view

Displays memory/cpu/network bandwidth numbers for each

replica_idof thisreplica_typein each chart.Replica_typerepresents a type of service processes for a given job. It can be one of these types: weight, command, activation, broadcastreduce, chief, worker, coordinator.Replica_idcorresponds to the specific replica for a job and a replica type

Assigned nodes

Displays physical nodes status that are assigned to the chosen

replica_typeandreplica_id

MemX performance

Shows iteration-based performance, iteration time, cross-iteration time, and backward iteration time

The following figure displays Cerebras’s WsJob dashboard:

On the left you can find options to search for particular metrics and view metric details.

There are also filters for users to select:

wsjob

Indicates the ID of the weight-streaming run, which is used to select between different runs on a particular system

replica_type

Allows selecting between the activation, weight, and all server metrics

assigned_systems

Indicates the system name being shown in the logs

Other fields available that are useful are the model, job_type, and the replica_id.

Prerequisites#

You have access to the user node in the Cerebras Wafer-Scale cluster. Contact your sys admin if you face any issues in the system configuration.

You can run a port-forwarding SSH session through the user node from your machine with this command:

$ ssh -L 8443:grafana.<cluster-name>.<domain>.com:443 myUser@usernodeNote

This command uses the local port

8443to forward the traffic. You can choose any unoccupied port on your machine.

How to get access?#

Contact Cerebras Support team if you need any assistance obtaining access to Grafana.

Viewing performance metrics using the WsJob dashboard#

You can view cluster iteration-performance metrics by tracking update times across the weight servers.

Our current dashboard implementation shows iteration time, forward-iteration time, backward-iteration time, cross-iteration time, and input starvation.

Iteration time

Indicates the time from the end of iteration “i-1” on the weight servers to the end of iteration

ion the weight servers.Forward-iteration time

Indicates the time spent in iteration “i” during the forward pass.

Backward-iteration time

Indicates the time spent in iteration “i” during the backward pass.

Cross-iteration time

Indicates the time between the last gradient receive of an iteration to the first weight send. A high value indicates an optimizer performance bottleneck.

Input starvation

Indicates the time spent waiting on the framework to receive activations.

These statistics are shown in the following image and can be used to identify performance bottlenecks in the training process:

Viewing utilization metrics using the WsJob Dashboard#

The Replica view metric displays memory/cpu/network bandwidth numbers for each replica_id of this replica_type

in each chart. Replica_type represents a type of service process for a given job. It can be one of these

types: weight, command, activation, broadcastreduce, chief, worker, and coordinator.

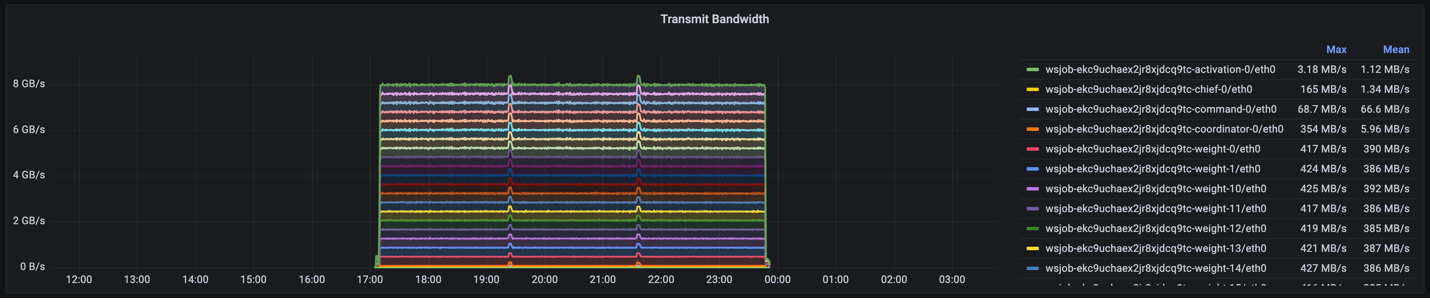

1. Transmit bandwidth indicates the maximum and mean network egress speeds for each activation server. This might be helpful information to monitor whether jobs are network-bound via the transmission speeds of a lagging node.

The following figure shows that most weight servers achieve a network transmit speed of ~420 MB/s:

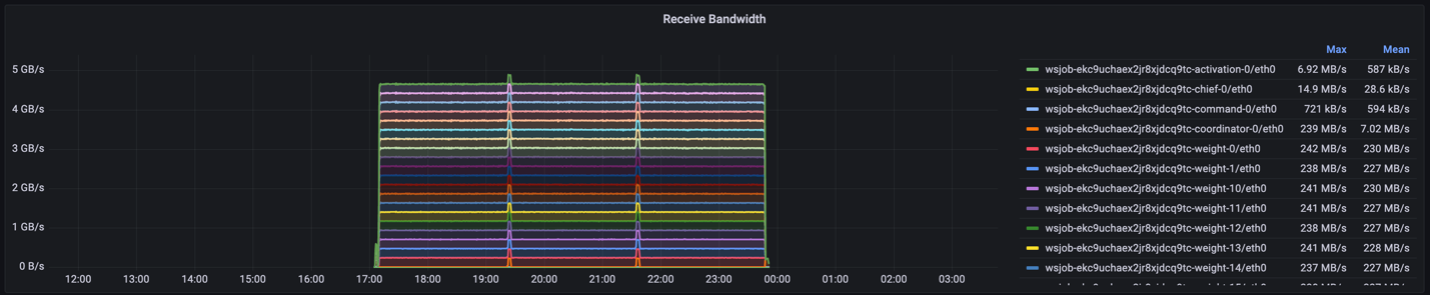

2. Receive bandwidth denotes the ingress speeds for each supporting server. For example, in this instance, the weight servers have an average ingress speed of around 220MB/s.

The following figure shows the receive bandwidth metric:

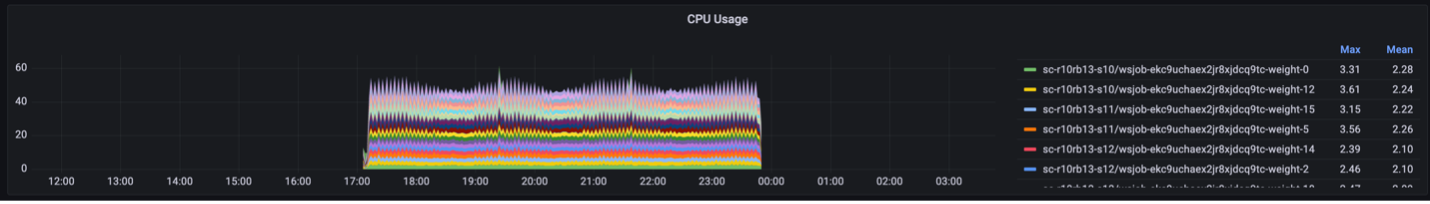

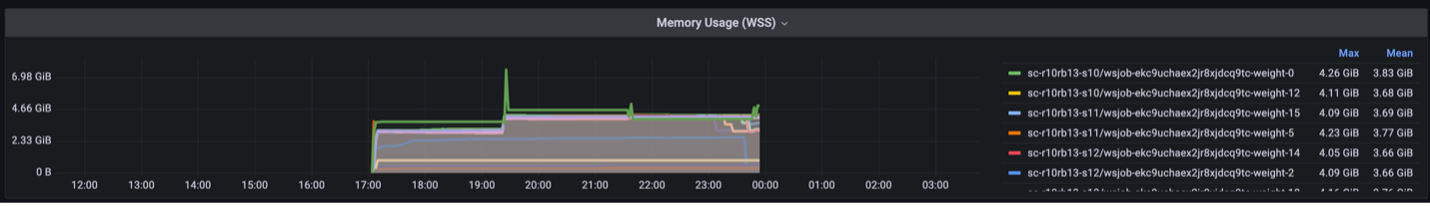

3. CPU usage shows the CPU percentage utilization for each weight-server. In this case, the CPUs are only 2-3% utilized.

The following figure shows the cpu usage metric:

4. Memory usage indicates the maximum and mean amounts of memory each weight server uses over time. This can be useful in debugging whether the weight servers are memory bound. For more information on memory requirements, visit Resource requirements for parallel training and compilation.

The following figure shows the memory usage metric:

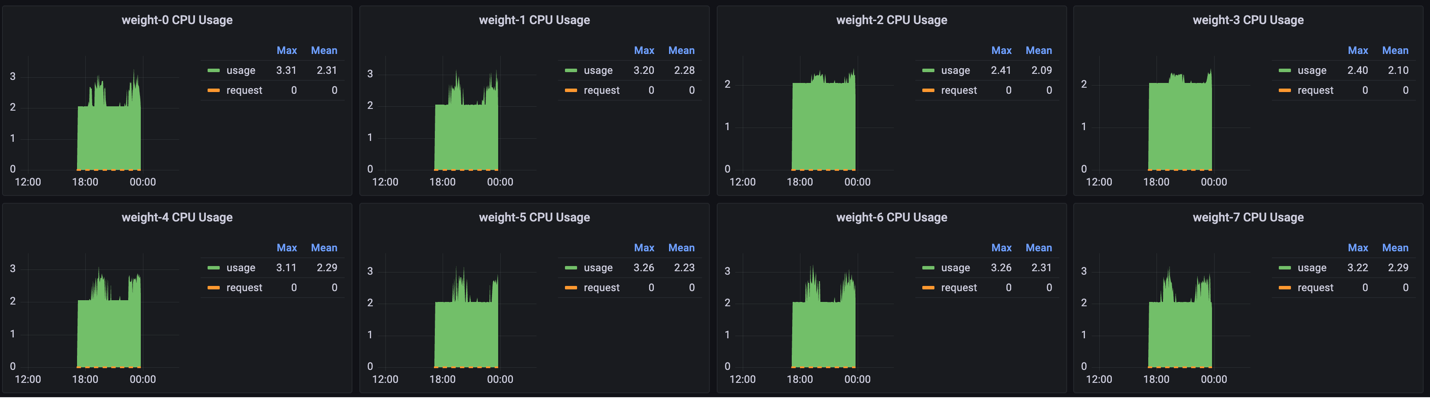

5. You can use the Grafana interface to show individual metrics for each node. For example, these are the views for CPU and memory usage per node:

The following figure shows the cpu usage per node metric:

The following figure shows the memory usage per node metric: